Building the Public Data Infrastructure to Accelerate Lab-to-Market Commercialization

By Jesse Lou, Rosie Keller, Teasha Feldman-Fitzthum

Every year, thousands of scientists make the leap from research lab to startup. They carry breakthrough technologies and deep technical conviction — but most have never built a financial model. They're expected to lead teams, make go/no-go decisions, raise capital, and build companies. The question they're rarely equipped to answer is the one that matters most: can this technology work commercially?

The tool that should anchor those decisions is techno-economic analysis (TEA): a structured way to evaluate commercial viability, understand what drives cost, identify where uncertainty lives, and connect R&D choices to commercial outcomes. When scientists build a TEA, they develop the intuition they need to navigate the hardest decisions in commercialization.

The Problem

The gap isn't just a problem for individual teams. It's a systemic failure at ecosystem scale.

There are roughly

Before Google Maps and GPS, every driver navigated independently: buying paper maps, printing out MapQuest directions, asking strangers the way, and making expensive wrong turns. Routes existed but weren't widely accessible. What was missing was a shared, adaptive navigation layer to make them useful.

Climate commercialization is at the same inflection point. Scientists are developing breakthrough technologies that must be economically feasible to scale. Industrial data exists but is opaque. What's missing is the shared analytical infrastructure: the map layers, navigation tools, and local knowledge that helps scientists find their path to market.

Why Teams Get Stuck

Over 1,500 hours of coaching early-stage teams, we've observed a consistent set of patterns that explain why TEA adoption remains so low — even among teams that understand its importance.

TEA is inaccessible

TEA has a reputation problem. It's seen as a complex, high-precision academic exercise requiring specialized software, months of effort, and niche expertise. When scientists open a standard TEA template and see fifteen tabs of hardcoded assumptions and unfamiliar acronyms, many close it and go back to the lab. The people who need it most face the steepest cold-start problem, and the analysis is inherently idiosyncratic: every technology requires its own tailored approach, which makes generic templates feel both overwhelming and insufficient.

Trust is hard to build

Even when teams get started, they struggle to build enough confidence in their analysis to actually make decisions from it. The issue runs on two levels: trusting that the model's structure is sound, and trusting the underlying input data. Without confidence in their assumptions, teams hesitate to commit to the analysis — and without that commitment, they never take the steps that matter most: internalizing sensitivities, identifying the R&D bets that actually move the needle, and communicating their economics with conviction.

The data bottleneck eats months

A working draft TEA takes 20–25 focused hours. But teams routinely spend months getting there, because every assumption becomes a mini research project. What does a commercial-scale electrolyzer actually cost? How is carbon capture typically structured at this process stage? Is this yield assumption reasonable, or am I off by an order of magnitude? Each question can mean days of searching across scattered reports, academic papers, and vendor quotes — often arriving at ranges so wide they undermine confidence in the whole exercise.

Those hours spent hunting for data don't compound. A founder who spends three weeks tracking down electrolyzer cost ranges hasn't learned anything about their own technology — they've just removed a blocker. The real learning happens in model building: understanding how processes connect, stress-testing assumptions, running sensitivities. The data search is pure overhead.

Massive redundancy at ecosystem scale

The waste compounds across the ecosystem. A team in Cambridge and a team in Singapore independently spend weeks hunting down the same data point. An expert could gut-check that figure in five minutes — they know which sources to trust, what ranges are reasonable, and when something is off. When that validation happens once and is made accessible, it serves thousands of teams. The leverage is enormous, but only if it's coordinated.

Individualized help works, but doesn't scale

Through our past services work, we've seen what happens when teams get personalized support. The difference is like driving school versus taking an Uber: teams that get behind the wheel and learn to merge onto the highway build the skill themselves, while passengers only reach the destination. When founders build the model with their own hands, they develop a deep understanding that helps them accept hard truths about their economics and empowers them to course-correct as their technology and market evolve.

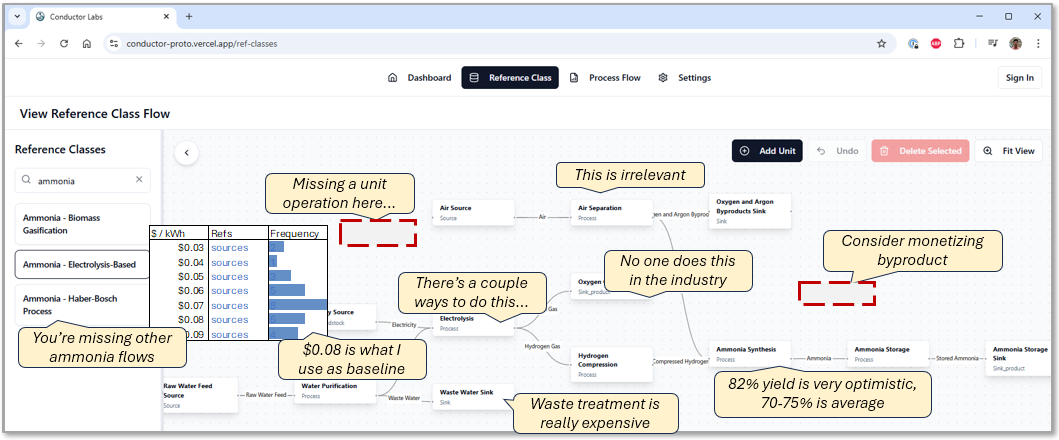

But one-on-one coaching relies on expert time and is impossible to scale. Together with our TEA practitioner network, we've collectively seen hundreds of teams and identified common bottlenecks: finding strong assumptions, resolving uncertainty about model outputs, and modeling at the right level of abstraction. The patterns are consistent enough that shared resources and infrastructure could solve a large fraction of the problem, if they existed.

Our Thesis

There is no shortage of people who want to help. Experienced practitioners, accelerator mentors, program managers at national labs — they exist, they're engaged, and many are actively looking for better ways to contribute. But their efforts are diffuse. An expert answers the same assumption question for the fifth time. A mentor coaches a founder through a model they don't have good data for. A program manager watches cohort after cohort hit the same wall. The opportunity isn't finding more people who care — it's concentrating the efforts of the ones who already do.

AI makes the scalable navigation layer possible for the first time. LLMs can deliver personalized, adaptive guidance at a fraction of the cost of expert time — meeting each team where they are, adapting to their specific technology and context. It is now technically feasible to give every climate scientist access to expert-level TEA support through software.

But software alone isn't sufficient. Without validated industrial data underneath it, AI produces plausible-sounding assumptions that quietly lead teams in the wrong direction. And without humans in the loop — practitioners validating the data, mentors providing ground-truth judgment — the system can't earn the trust it needs to change behavior.

The TEA Commons is built around three mutually reinforcing pillars:

- Validated Data as the map layers that make every analysis trustworthy.

- AI-Enabled Tooling as the turn-by-turn navigation that guides teams through the process.

- And a Human Network of practitioners and partners — the locals who know the roads and keep the maps honest, whose leverage is multiplied by good infrastructure.

What We're Building

Pillar 1: Data — The Map Layers

The industrial data repository covers all major climate-relevant industries, organized by reference class: the established and emerging industrial processes that breakthrough technologies slot into, displace, or recombine. The scope is roughly 20 industry verticals (hydrogen, batteries, carbon capture, biofuels, and critical minerals, among others), each containing 30–40 sub-industries or process configurations, or roughly 600–800 in total.

Within each, we collect the structural, process, and economic data a founding team needs: raw material costs, capex benchmarks, performance ranges, product specifications, and carbon intensity.

For each data point, we target rough accuracy: ±30% ranges aggregated from public sources and validated by domain experts. This is the precision early-stage teams actually need for directional decisions — enough to identify which parameters drive outcomes, where to focus R&D, and what questions deserve more scrutiny. It's also fast for experts to provide without running into proprietary constraints, which makes the contribution model viable at scale.
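To make the ±30% target concrete, here is a minimal Python sketch of how a range-based data point could feed a one-at-a-time sensitivity check. Everything in it is an illustrative assumption rather than the TEA Commons schema: the `DataPoint` class, the toy `unit_cost` model, and the placeholder numbers are hypothetical and do not come from the repository.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataPoint:
    """Hypothetical repository entry: a central estimate with a relative range."""
    name: str
    central: float
    unit: str
    rel_range: float = 0.30  # assumed symmetric ±30% band around the central value

    @property
    def low(self) -> float:
        return self.central * (1 - self.rel_range)

    @property
    def high(self) -> float:
        return self.central * (1 + self.rel_range)


def unit_cost(capex_per_unit: float, feedstock_cost: float, yield_fraction: float) -> float:
    """Toy cost model (illustrative only): capital charge plus feedstock cost per unit of product."""
    return capex_per_unit + feedstock_cost / yield_fraction


def one_at_a_time(model, points):
    """Vary each input across its range while holding the others at their central values.

    Returns (name, swing) pairs sorted by the size of the output swing, largest first.
    """
    base = {p.name: p.central for p in points}
    swings = {}
    for p in points:
        at_low = model(**{**base, p.name: p.low})
        at_high = model(**{**base, p.name: p.high})
        swings[p.name] = abs(at_high - at_low)
    return sorted(swings.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Placeholder values chosen only to exercise the code, not real industry data.
    points = [
        DataPoint("capex_per_unit", 2.0, "$/kg"),
        DataPoint("feedstock_cost", 3.0, "$/kg"),
        DataPoint("yield_fraction", 0.6, "kg product / kg feed"),
    ]
    for name, swing in one_at_a_time(unit_cost, points):
        print(f"{name}: output swing of {swing:.2f} $/kg")
```

With these placeholder inputs, the yield assumption produces the largest swing in unit cost and the capital charge the smallest. That ranking is the kind of directional signal a ±30% range is meant to support: it shows where tighter data or more R&D would matter most, without requiring precise point estimates.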

In aggregate, the repository will contain millions of data points, continuously improved as users submit feedback and experts refine validation. The 20% of data that unlocks 80% of the value gets built first — the highest-impact reference classes that recur across teams and verticals deliver substantial value long before the full repository is complete.

Pillar 2: Tooling — Turn-by-Turn Navigation

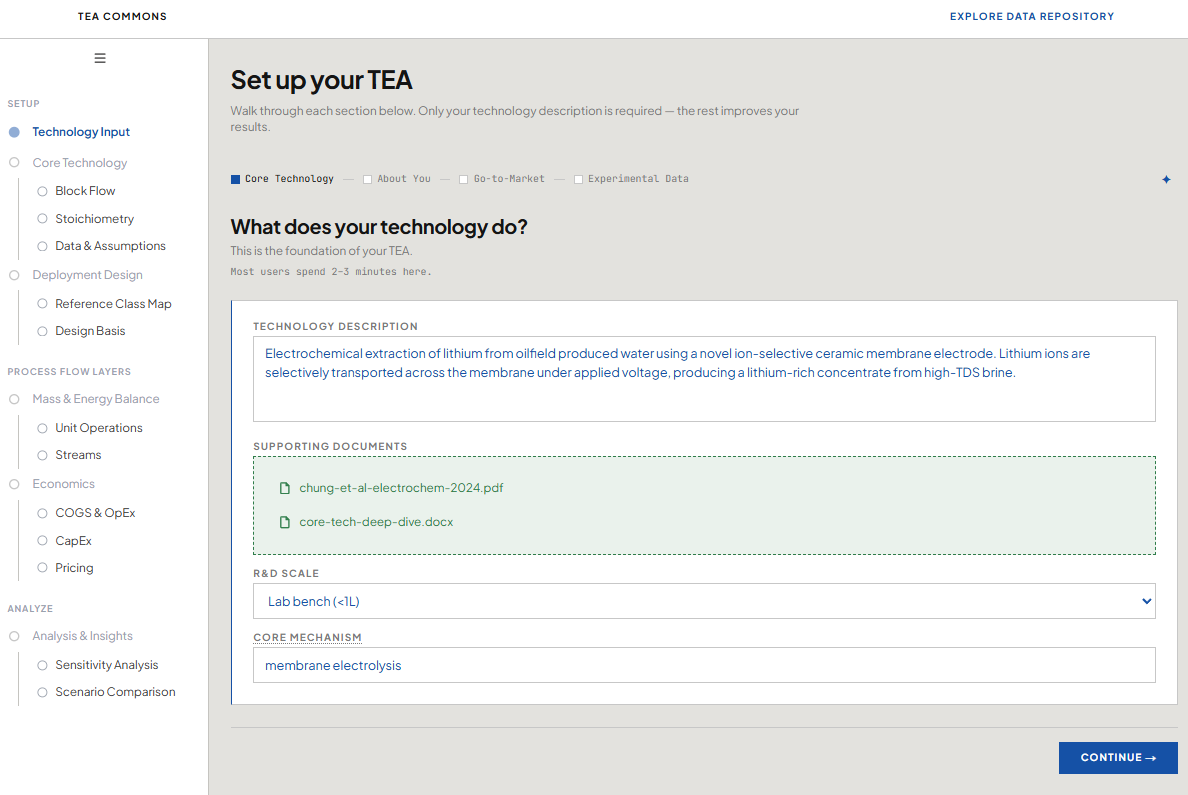

The AI co-pilot guides teams from a blank sheet to a working model — mapping process flows, surfacing relevant data from the repository, walking through a step-by-step framework tailored for early-stage teams, and flagging where assumptions need scrutiny. It adapts to each team's technology and stage rather than forcing them through a generic template.

The goal is a founder who deeply understands their own model, not one who received an answer from a black box. The teams that get the most value from TEA are the ones who built it themselves, who know what's in every cell and why. The tooling creates productive friction — the AI as a thinking partner, working through the analysis alongside the founder rather than ahead of them.

Underneath the co-pilot sits a TEA calculation engine — a core analytical layer that ensures numerical rigor independent of the LLM. This engine is also useful beyond individual team TEAs: it can support IP evaluation, white space identification, and portfolio-level analysis for investors and program managers.
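One way to picture a calculation layer that is independent of the LLM is a set of plain, deterministic functions that the co-pilot calls but never replaces. The sketch below is an assumption about what such a layer might look like, not a description of the actual engine: the function names and the simplified levelized-cost structure are hypothetical, and the only standard piece is the capital recovery factor used to annualize capex.

```python
def capital_recovery_factor(discount_rate: float, lifetime_years: int) -> float:
    """Standard annuity factor that spreads an upfront cost over a lifetime."""
    if lifetime_years <= 0:
        raise ValueError("lifetime_years must be positive")
    if discount_rate == 0:
        return 1 / lifetime_years
    growth = (1 + discount_rate) ** lifetime_years
    return discount_rate * growth / (growth - 1)


def levelized_cost(
    capex: float,
    fixed_opex_per_year: float,
    variable_cost_per_unit: float,
    annual_output_units: float,
    discount_rate: float,
    lifetime_years: int,
) -> float:
    """Simplified levelized cost per unit of output (illustrative structure only)."""
    if annual_output_units <= 0:
        raise ValueError("annual_output_units must be positive")
    annualized_capex = capex * capital_recovery_factor(discount_rate, lifetime_years)
    return (annualized_capex + fixed_opex_per_year) / annual_output_units + variable_cost_per_unit


if __name__ == "__main__":
    # Placeholder inputs, not real project data.
    cost = levelized_cost(
        capex=1_000.0,
        fixed_opex_per_year=50.0,
        variable_cost_per_unit=2.0,
        annual_output_units=100.0,
        discount_rate=0.08,
        lifetime_years=20,
    )
    print(f"Levelized cost: {cost:.2f} per unit")
```

In a design like this, the LLM would only propose or explain inputs. The number itself comes from a pure function that returns the same result for the same inputs, so it can be tested, audited, and reused for portfolio-level analysis.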

Pillar 3: Network — The Locals Who Know the Roads

Even the best navigation system benefits from people on the ground. The Network pillar supports two interrelated roles.

Topical experts — TEA practitioners and industry veterans — are the validation backbone of the data repository. An electrochemist who has designed commercial-scale PEM systems can gut-check an electrolyzer capex assumption in minutes and assign a confidence score that makes that data point useful for thousands of teams. These experts also make themselves available for targeted consultations, providing the kind of judgment no database can fully encode.

Boots on the ground — accelerator mentors, research program managers, lab ecosystem teams — are the human-in-the-loop for early-stage founders. They know their teams deeply and are often the first call when something feels off. The TEA Commons gives these partners better infrastructure: validated data to reference, tooling to point founders toward, and a community where questions get answered.

Why It Matters

A TEA is the substrate of an early-stage climate company — the analytical backbone that drives internal decisions and shapes every external conversation a founder has.

TEAs travel. They go into pitch decks and data rooms that help investors decide where to place bets. They inform program officers choosing which white spaces to fund. They shape partnership conversations with customers assessing adoption risk. When the underlying analysis is sound and a founder can show their work, every downstream interaction improves — and those interactions move capital, talent, and policy in ways that compound far beyond any individual team.

Building data and tooling as public goods also lays the foundation for something that doesn't yet exist: a shared standard for how early-stage climate economics get communicated. When innovators, investors, incumbents, and policymakers work from a common analytical language, the friction between them drops — and the pace of deployment accelerates.

The Flywheel

If the data and tooling deliver real value, teams will find them organically and programs will push their cohorts to adopt them. As adoption grows around a shared data baseline, experts and incumbents gain an incentive to participate, contributing data and validation in exchange for exposure to a higher-quality early-stage pipeline.

Over time, the TEA Commons becomes an enabling layer for the entire ecosystem. Companies and policymakers can build internal tools on top of shared infrastructure for their own analysis. The same self-reinforcing dynamic that made open data infrastructure like PubChem foundational to modern biology can work here — each cycle of contribution and use makes the commons more valuable, drawing in the next wave of participants.

Why Now, and Why This Team

The data collection challenge is an operational problem, not a research problem. It doesn't require new AI breakthroughs; it requires sustained, well-coordinated execution across a coalition of domain partners. We can start delivering value now, with the highest-priority reference classes, while the full repository is built out.

Our team brings direct experience across every dimension this effort requires.

- On TEA, we've worked across the full lab-to-market lifecycle — from pre-spinout research teams to late-stage project finance — including three years leading fusion economics at Commonwealth Fusion Systems and over 1,500 hours of hands-on TEA coaching.

- On early-stage support, we've scaled dozens of entrepreneur support programs across Asia and Africa to hundreds of founders through Seedstars, and bring deep experience from Breakthrough Energy Fellows.

- On software and AI, our backgrounds include MIT CSAIL research commercialized into ML-based wind resource assessment (acquired) and building AI for actuarial modeling at Cyence.

- And across industrials, we bring pattern recognition from McKinsey (oil & gas, chemicals, mining, infrastructure), Breakthrough Energy Fellows, and ARPA-E (blue-tech and CDR ecosystem mapping).

We already have a coalition of 50+ partners across academia, government, accelerators, and investors — including Breakthrough Energy Fellows, MIT, Undaunted (Imperial College), Oxford, Homeworld, and FedTech — and an active pipeline of organizations ready to join.

The Investment

We are raising an estimated $8–10M in philanthropic funding and forming partnerships to coordinate a coalition-wide build of the industrial data repository and AI-enabled tooling.

This investment increases the leverage of over $100B in global climate R&D and private capital, accelerates commercialization of technologies representing a significant share of 2050's potential abatement, and builds the analytical public good the entire ecosystem can build on. Our approach is to build the highest-impact reference classes and data first — delivering substantial value early while the full infrastructure scales.

Join Us

The TEA Commons is designed to be accessible to every climate scientist, every research institution, every accelerator mentor — regardless of geography, stage, or resources.

Funders: We're seeking philanthropic capital to fund the data build-out and tooling development — an investment in public goods that increases the leverage of every downstream dollar spent on climate innovation. If you see the opportunity in building shared analytical infrastructure, we'd love to talk.

Research institutions and ecosystem enablers: We're looking for pilot partners who will push their teams to adopt TEA and contribute domain expertise for specific verticals. If you support early-stage climate teams through accelerators, incubators, or lab-to-market programs, there are concrete ways to work together.

Practitioners and domain experts: The data repository is only as good as the people who validate it. If you're a TEA practitioner or industry veteran who believes in this field, we're building a community for you — one where your expertise reaches thousands of teams instead of a handful.

Industry and incumbents: Your operational data and process knowledge can dramatically accelerate the quality of the repository. In return, a stronger analytical baseline across the ecosystem means a higher-quality pipeline of technologies and teams reaching your door.

We'd be grateful to have you alongside us.